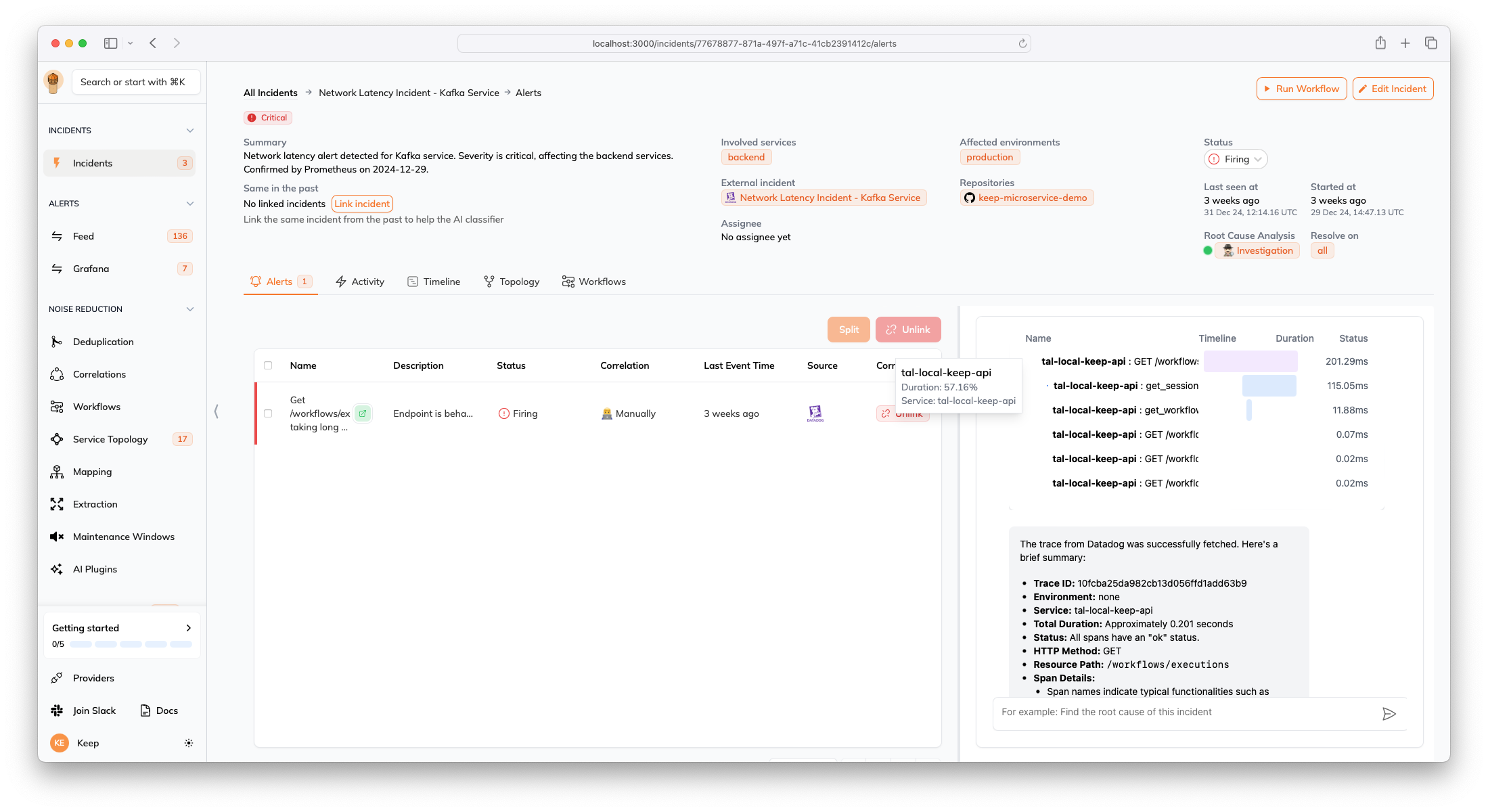

The AI incident assistant is a chat feature embedded in the incident page. It passes all incident context (including alerts, descriptions, and impacted topology) to the LLM, helping on-call engineers gather information faster and resolve incidents more efficiently. Users can ask for root cause analysis and even execute commands on third-party services (read more about provider methods).
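As a rough illustration of what "passing incident context to the LLM" can look like, the sketch below flattens an incident into a single text block suitable for a chat prompt. The `Incident` class, its fields, and `build_context_message` are hypothetical names chosen for this example; they are not Keep's actual data model or API.

```python
from dataclasses import dataclass, field


@dataclass
class Incident:
    # Hypothetical shape for illustration; Keep's real incident model may differ.
    description: str
    alerts: list[str] = field(default_factory=list)
    impacted_services: list[str] = field(default_factory=list)


def build_context_message(incident: Incident) -> str:
    """Flatten incident context into one text block for an LLM prompt."""
    lines = [f"Incident description: {incident.description}", "Alerts:"]
    lines += [f"- {alert}" for alert in incident.alerts]
    lines.append("Impacted topology:")
    lines += [f"- {service}" for service in incident.impacted_services]
    return "\n".join(lines)


# Example data, invented for this sketch.
incident = Incident(
    description="Checkout latency spike",
    alerts=["p99 latency > 2s on checkout-api"],
    impacted_services=["checkout-api", "payments-db"],
)
context = build_context_message(incident)
print(context)
```

In a setup like this, the resulting text would be sent ahead of the user's question so the model can answer with the incident details already in view.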

Frequent questions:

- Model used: OpenAI, a model hosted by Keep, or another provider.
- Data flow: Data is shared between Keep and the LLM provider. Which LLM provider is used may vary depending on the contract.